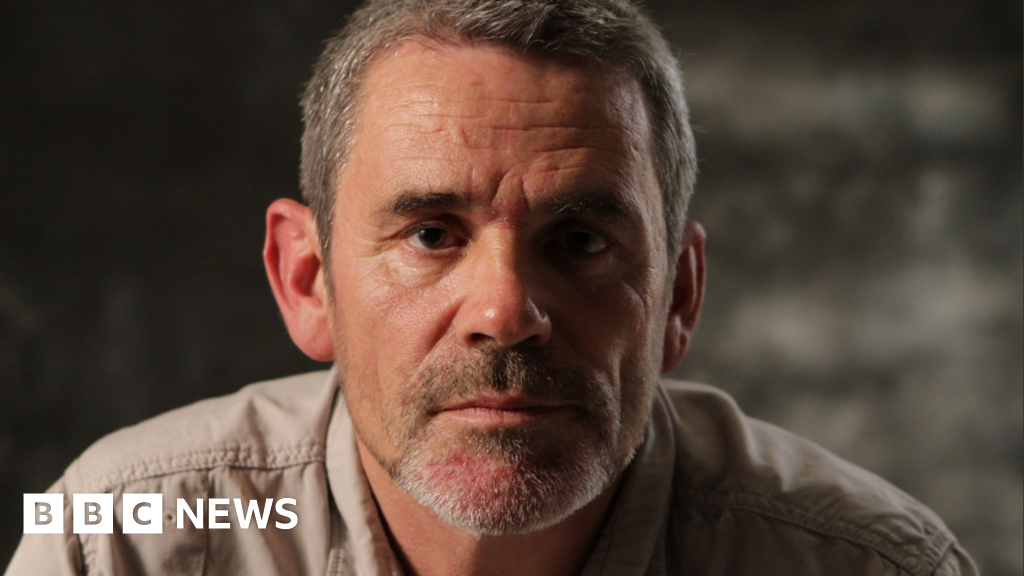

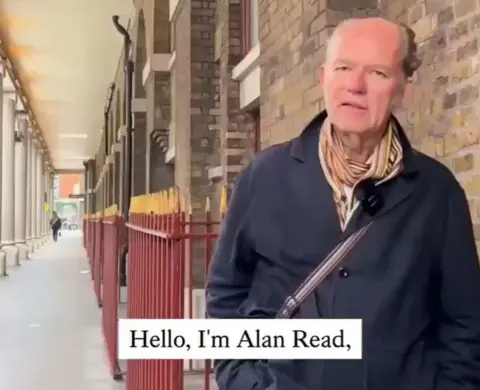

King’s College London professor Alan Read, a theatre academic with no political affiliations, initially paid little attention to the occasional deepfakes that appeared in his social media feeds. Sometimes he reported them; other times he simply scrolled past. That changed when an obscure account tagged him in a video featuring his own face. In it, a synthetic voice closely resembling his own delivered a politically charged diatribe against French President Emmanuel Macron and other Western leaders, describing them as being "aboard the Titanic which has ‘European Union’ written on its hull." "Almost everything in that video is egregious, and awful to listen to," Dr. Read told the BBC. "It strikes me as… utterly alien to me."

The video made Dr. Read an unwitting part of a new wave of Russia-linked synthetic videos that have spread across social media platforms in recent months. Security experts say the trend shows that the West needs to strengthen its defenses against the Kremlin’s growing use of artificial intelligence for influence operations. Chris Kremidas-Courtney, a defense and security analyst at the European Policy Centre think tank, described a fundamental shift: "What we’re seeing is not just a spike in deepfakes but a shift in how influence is produced. We face systems that can generate… persuasion at scale, for pennies. To me, this is a revolution in political influence, and none of our current governance schemes are ready to address it." The videos, some of which have drawn hundreds of thousands of views, aim to discredit EU institutions and cast doubt on the integrity of the government in Kyiv, as Ukraine seeks funding from Western partners to sustain its defense against Russia’s full-scale invasion, now entering its fifth year.

The surge in AI-generated disinformation follows rapid advances in generative AI. The release of OpenAI’s Sora 2, the latest version of its video-generation software, markedly improved the realism and coherence of synthetic video. It also set off a scramble for market share among competing AI tools, and many of these secondary apps attract users by cutting prices or by dropping safety measures such as the watermarks Sora 2 uses to distinguish its output from authentic footage. "They need to draw in users," said Russian AI expert Arman Tuganbaev. While leading platforms such as OpenAI are working to prevent the creation of videos depicting specific individuals, he noted, "second-tier apps will give you that option." OpenAI has said it takes action against accounts that use its tools deceptively to cause harm, including by misrepresenting the origin of content. This competition has contributed to a steady increase in both the volume and sophistication of foreign influence campaigns, strengthening Russia’s hand in its hybrid conflict with the West.

The effects are already visible across Europe. In late December, a series of AI-generated videos went viral on TikTok showing young Polish women ostensibly calling for "Polexit," Poland’s withdrawal from the European Union. "There is no doubt that this is Russian disinformation," said Adam Szlapka, Poland’s government spokesman. "If someone looks closely, they can spot Russian syntax in these videos." Poland subsequently asked the European Commission to investigate TikTok. The platform, which has since removed the clips and the associated accounts, said it had taken down more than 75 covert influence operations worldwide in 2025 alone.

The threat is not confined to continental Europe. In the United Kingdom, Members of Parliament have warned that Russian deepfakes could be used to influence the local elections scheduled for May. "We have seen them used extensively in elections around the world, so there is no reason to assume Britain would be an exception," Vijay Rangarajan, chief executive of the UK Electoral Commission, told lawmakers. The UK’s Online Safety Act requires platforms to remove material proven to be part of foreign influence operations, but it does not explicitly classify disinformation as a harm. In practice, verifying and removing such content often takes too long, while viral videos can reach large audiences within hours.

Pinpointing the origin of these campaigns remains difficult. Still, Western researchers have identified traits common to many of them, including stylistic cues and distribution patterns, that point strongly to organized, Kremlin-aligned disinformation units. One campaign, known as "Matryoshka" (after the Russian nesting dolls) or "Operation Overload," is believed to have produced a wave of synthetic videos aimed at discrediting Moldova’s president, Maia Sandu, during her 2025 election bid. NewsGuard, an organization that tracks online disinformation, has identified recurring patterns suggesting the same network was likely behind the video featuring Dr. Read. The Matryoshka name reflects the campaign’s method: an initial false narrative is wrapped in layers of reposts from old or compromised social media accounts, obscuring its true source.

Covert, AI-driven campaigns of this kind offer an advantage over traditional state propaganda. Unlike established Russian outlets such as RT and Sputnik, which were quickly sanctioned in the West after the invasion of Ukraine, these operations "allow for a level of… plausible deniability that complicates counter-influence efforts," said Sophie Williams-Dunning, a cyber and tech researcher at the Royal United Services Institute think tank. That deniability makes it harder for Western governments and intelligence agencies to assign responsibility and craft effective countermeasures.

Further research points to links between these operations. Academics at Clemson University have tied a separate network, which Microsoft’s Threat Analysis Centre tracks as Storm-1516, to former operatives of the Kremlin’s "troll factory," run by Yevgeny Prigozhin, the late leader of the Wagner paramilitary group. A forthcoming study, reviewed by the BBC, shows how quickly fake narratives now travel on social media. The researchers found that whenever Storm-1516 pushed a false narrative, such as the claim that Ukrainian President Volodymyr Zelensky was "corrupt," it appeared in roughly 7.5% of all discussion of the Ukrainian president on X (formerly Twitter) within the following week. "That is something any marketing company would be proud of," said Darren L. Linvill, one of the study’s authors. The combination of advanced AI tools with established disinformation networks poses a growing challenge to the integrity of information and to democratic processes.