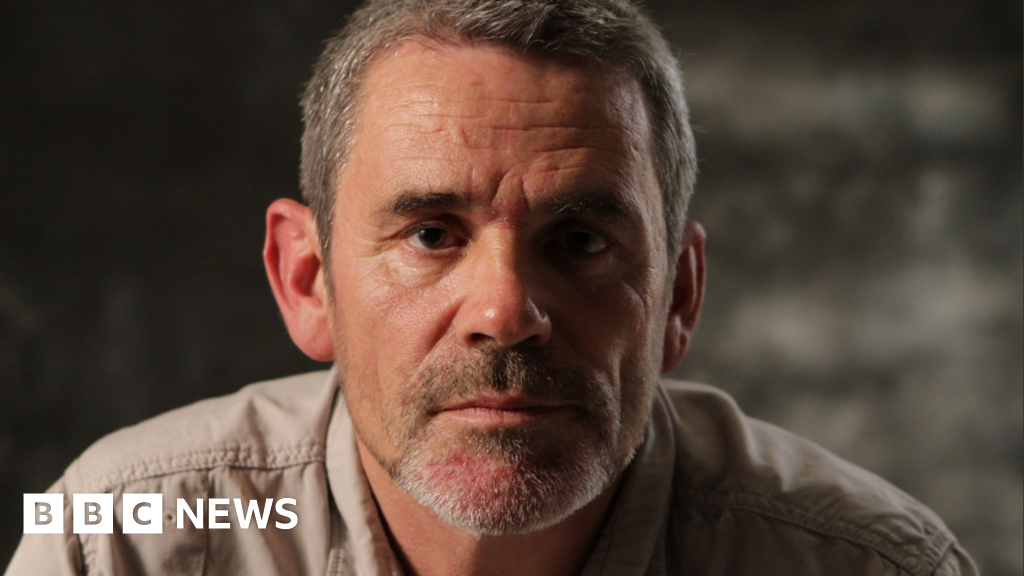

However, the announcement has been met with strong criticism from suicide prevention charities, who express concerns that the measures could inadvertently cause more harm than good. Andy Burrows, chief executive of the Molly Rose Foundation, a prominent suicide prevention charity, voiced his reservations, stating, "This clumsy announcement is fraught with risk and we are concerned that forced disclosures could do more harm than good." He elaborated on these concerns, emphasizing that while "every parent would want to know if their child is struggling," these "flimsy notifications" are likely to leave parents feeling "panicked and ill-prepared to have the sensitive and difficult conversations that will follow." Burrows’ critique highlights a potential disconnect between Meta’s stated intentions and the practical implications for families navigating such delicate situations.

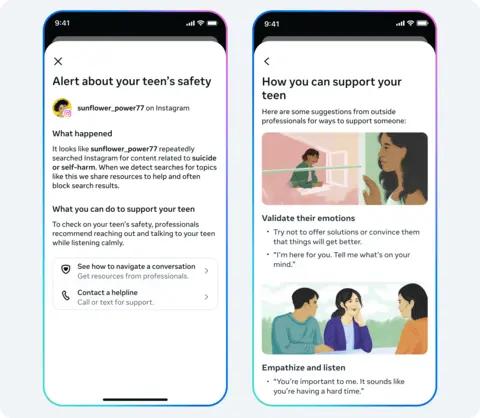

In response to these criticisms, Meta says the alerts, which are sent to parents when their child searches for suicide and self-harm material within a short space of time on Instagram, will be accompanied by expert resources to help them navigate difficult conversations with their child. This suggests an attempt by Meta to provide a more holistic support system, acknowledging the sensitive nature of the information being shared. However, the Molly Rose Foundation remains unconvinced, citing its own research published in September that indicated Instagram still "actively" recommends harmful content related to depression, suicide, and self-harm to "vulnerable young people." Burrows further argued, "The onus should be on addressing these risks rather than making yet another cynically timed announcement that passes the buck to parents." This directly challenges Meta’s assertion of its protective measures and places the responsibility for mitigating harm squarely on the platform itself.

Meta has rejected the findings of the Molly Rose Foundation, stating that the charity’s research "misrepresents our efforts to empower parents and protect teens." This public disagreement underscores the ongoing tension between social media companies and advocacy groups regarding the effectiveness of safety measures for young users. The alert system is scheduled for an initial rollout in the United Kingdom, the United States, Australia, and Canada, with plans to extend its reach to other parts of the world later in the year. A phased approach of this kind would allow Meta to refine the system based on initial user feedback and technical performance before a broader global deployment.

The new alerts are an extension of Instagram’s existing efforts to protect its younger demographic, which include actively hiding content related to suicide or self-harm and blocking searches for harmful or dangerous material. The intention behind the Teen Accounts experience is to provide parents with greater insight into their child’s online activities, particularly when there are signs of a significant shift in their behavior or search patterns on the platform. These alerts will be delivered through various channels, including email, text messages, WhatsApp, and directly within the Instagram app, depending on the contact information Meta has on file for the family. This multi-channel approach aims to ensure that parents receive the notifications promptly and through their preferred communication methods.

Meta acknowledges that the alert system, which is informed by its analysis of user search patterns, may occasionally trigger a notification even when there is no genuine cause for concern. The company has stated that the system is designed to "err on the side of caution," prioritizing the potential safety of young users. This admission points to the inherent limitations of algorithmic detection and the need for a sensitive approach. Furthermore, Meta indicated that in the coming months it plans to extend similar alerts to conversations about self-harm and suicide with AI chatbots on Instagram. This reflects growing awareness that children increasingly rely on artificial intelligence for support and information, and that safety measures need to extend to these emerging forms of interaction.

The implementation of these new features comes at a time when social media companies are facing mounting pressure from governments globally to enhance the safety of their platforms for children. Regulators and lawmakers are intensifying their scrutiny of the business practices of major technology companies, particularly concerning their engagement with young users. This heightened regulatory environment is a significant factor driving the development and deployment of such safety features. The digital landscape is continuously evolving, and with it, the challenges of protecting vulnerable users. The debate over the efficacy and ethical implications of these parental alerts is likely to continue as platforms grapple with the complex task of balancing user privacy with the imperative of child safety. The proactive notification system represents a significant step, but its ultimate success will depend on its implementation, the resources provided to parents, and its impact on the well-being of young users. The ongoing dialogue between tech companies, advocacy groups, and policymakers will be crucial in shaping the future of online safety for teenagers.