This stance is not a solitary one. The foundation established in Molly’s honour, the Molly Rose Foundation, stands united with a coalition of prominent children’s charities and online safety organisations. Together, they have issued a joint statement unequivocally opposing a social media ban for minors, highlighting a deep-seated concern that such a move would be counterproductive and potentially harmful. Their collective expertise suggests that a blunt instrument like a ban could inadvertently push vulnerable young people towards less regulated corners of the internet, making them harder to safeguard.

The debate around an under-16 social media ban has gained significant traction in political circles, especially since Australia introduced a similar measure in December; Meta has reported blocking around 550,000 accounts belonging to underage users on its platforms, including Facebook and Instagram. Prime Minister Sir Keir Starmer has indicated that "all options are on the table" for the UK, refusing to rule out following Australia's lead. This week, the House of Lords is poised to deliberate on proposals for a more nuanced ban, potentially through an amendment to the Children's Wellbeing and Schools Bill. Many Labour MPs and officials anticipate that the UK government will mirror Australia's decision, and several other European nations are actively considering comparable legislation. Health Secretary Wes Streeting recently expressed his support for a ban, while Conservative leader Kemi Badenoch affirmed she would introduce one if her party won the next general election, likening social media spaces unsuitable for children to nightclubs.

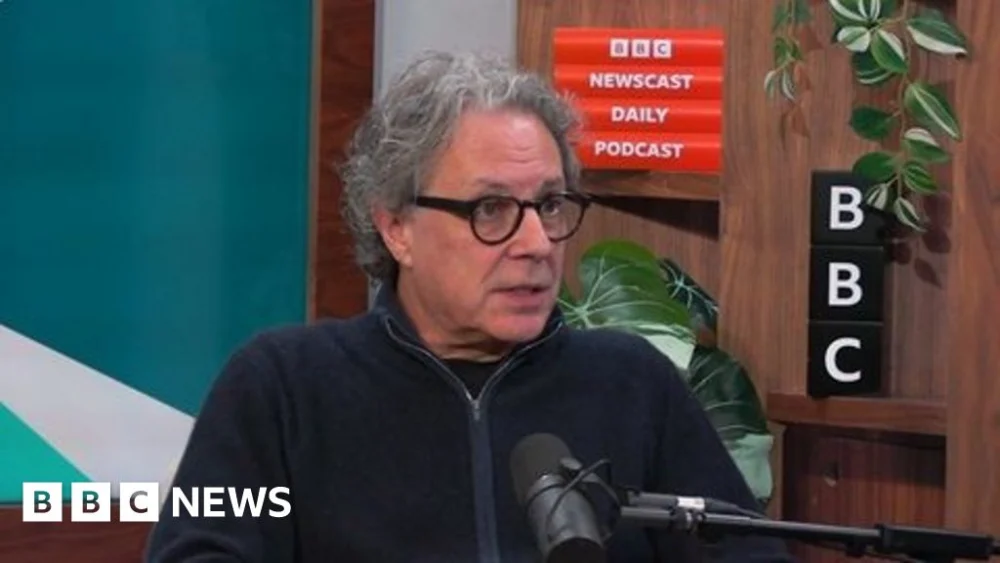

However, Ian Russell, a tireless campaigner for improved online protections for children since Molly's death in 2017, expressed profound dismay at the way politicians have, in his view, politicised this deeply sensitive issue. "Many of them have said things like: 'this is not something that should be a party political issue'," Russell noted, adding that bereaved families are "horrified" by what they see as opportunism. His core argument remains unchanged: the emphasis should be on strengthening and enforcing the legal frameworks already in place, rather than introducing new and potentially flawed legislation.

Russell voiced serious concern about the "unintended consequences" such a ban could unleash, asserting that it would "cause more problems than it solves." He believes the fundamental issue lies with "companies that put profit over safety." "That has got to change – and I don't think that we're that far away from it changing," he said, expressing frustration that the debate about bans is resurfacing just as progress seems within reach. He insists that building on existing regulatory structures is a far more effective strategy than "sledgehammer techniques" that could disrupt vital online support networks and drive young people to less visible, and therefore less safe, online environments.

An inquest in 2022 concluded that social media content had contributed "more than minimally" to Molly's death, a landmark finding that underscored the dangers of unregulated online spaces. The Molly Rose Foundation, the suicide prevention charity set up in her name, has joined organisations such as the NSPCC, Parent Zone, and Childnet in opposing a blanket ban. In their joint statement, these bodies labelled a ban the "wrong solution," warning that it would "create a false sense of safety that would see children – but also the threats to them – migrate to other areas online." The statement continued, "Though well-intentioned, blanket bans on social media would fail to deliver the improvement in children's safety and wellbeing that they so urgently need."

Instead of this "blunt response," the signatories advocated for a "broader and more targeted" approach. This includes the robust enforcement of existing laws to ensure that social media sites, personalised games, and AI chatbots are not accessible to children under 13. Furthermore, they called for all social media platforms to implement evidence-based blocks for features identified as risky for children of varying ages. The statement also urged the government to strengthen the Online Safety Act, a landmark piece of legislation, to compel online companies to "deliver age-appropriate experiences." Drawing a pertinent analogy, it suggested: "Just as films and video games have different ratings reflecting the risk they pose to children, social media platforms have different levels of risk too and their minimum age limits should reflect this."

Anna Edmundson, the NSPCC’s head of policy, further elaborated on the crucial role social media can play in young people’s lives. Speaking on BBC Breakfast, she highlighted that social media can be "vital" for children, offering "fun and connection, particularly for children and young people who are isolated for all sorts of reasons, or perhaps they are neurodiverse." She added that it is also "really important for peer support and access to trusted sources of advice and help, including Childline." Edmundson reiterated that the long-term solution lies in improving the regulation of social media firms, ensuring that "children aren’t thrown off a cliff edge when they go into these spaces," rather than simply cutting off access.

The early effects of Australia's under-16 ban have drawn mixed reactions. While Meta reported blocking a substantial number of accounts, teenagers themselves responded in different ways. Some described a new sense of freedom and reduced pressure, while others reported little change in their online habits, possibly because they had moved to alternative platforms or found workarounds. This anecdotal evidence lends weight to Russell's concerns about "unintended consequences" and the potential for threats to simply migrate.

Back in the UK, Sir Keir Starmer's cautious observation of Australia's experience suggests a careful weighing of options. Kemi Badenoch's comparison of unsuitable social media spaces to nightclubs reflects a view that certain online environments are inherently inappropriate for children. However, the analogy does not fully capture the complexity of online interactions, where content and algorithmic amplification, rather than mere presence, are the primary concerns. The Liberal Democrats' proposal, due for consideration in the Lords, offers a potential middle ground. Their plan envisions social media platforms being "graded like age ratings for films." Under this system, platforms using addictive algorithmic feeds or hosting "inappropriate content" would be restricted to users over 16, while sites featuring "graphic violence or pornography" would become adult-only. This approach aligns more closely with the "broader and more targeted" solutions advocated by Ian Russell and the coalition of online safety organisations, focusing on content and design features rather than a blanket prohibition of access.

The debate continues to highlight the complex challenge of balancing online safety with freedom of access and the potential benefits of digital connectivity for young people. Ian Russell’s unwavering advocacy, rooted in profound personal tragedy, serves as a powerful reminder that any solution must be thoughtful, comprehensive, and genuinely effective in safeguarding children, rather than simply offering a politically expedient but ultimately superficial fix. The ultimate goal, he insists, must be to compel platforms to prioritise safety over profit, creating an online world where children can explore, learn, and connect without being exposed to harmful content that undermines their mental wellbeing.

If you have been affected by the issues raised in this article, help and support is available via BBC Action Line.