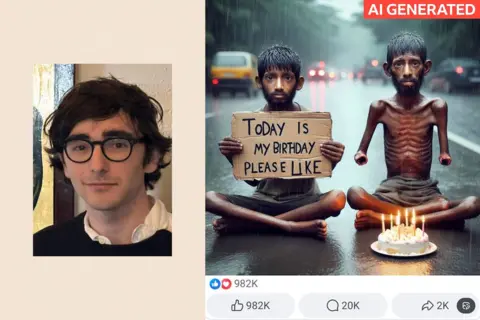

"It boggled my mind. The absurd AI-made images were all over Facebook and getting [a] huge amount of traction without any scrutiny at all – it was insane to me," Théo recounted, feeling something within him snap. In response, he launched an X (formerly Twitter) account named "Insane AI Slop," dedicated to calling out and humorously critiquing the misleading AI content he encountered. The account quickly gained traction, with his inbox flooding with submissions from others eager to share examples of this so-called "AI slop."

Common themes emerged: religion, military narratives, and heartwarming depictions of impoverished children. "Kids in the third world doing impressive stuff is always popular – like a poor kid in Africa making an insane statue out of trash. I think people find it wholesome so the creators think, ‘Great, let’s make more of this stuff up,’" Théo explained. His account has since grown to more than 133,000 followers, a sign of growing concern over the pervasive, often unconvincing AI-generated videos and pictures now being produced and shared at speed.

Tech companies have largely embraced AI, with some claiming to be cracking down on certain forms of AI "slop," though its presence remains widespread across social media feeds. This influx has profoundly altered the social media experience over the past few years, raising questions about its societal impact and whether billions of users truly care about the authenticity of the content they consume.

Social media is entering what Meta CEO Mark Zuckerberg described as its "third phase," now centered on AI. He said during a recent earnings call that, as AI makes content creation and remixing easier, social media platforms will incorporate a vast new corpus of AI-generated content alongside content from friends, family, and creators. Meta, owner of Facebook, Instagram, and Threads, is not only permitting AI-generated content but actively building tools to create it, offering image and video generators and advanced filters across its platforms. Asked about AI slop, Meta pointed to Zuckerberg’s earnings call, in which he emphasized the company’s increased focus on AI and made no mention of any specific crackdown on low-quality content. "Soon we’ll see an explosion of new media formats that are more immersive and interactive, and only possible because of advances in AI," Zuckerberg stated.

Similarly, YouTube’s CEO, Neal Mohan, noted in a 2026 outlook that over one million YouTube channels utilized the platform’s AI tools in December alone. He likened AI’s impact to that of synthesizers, Photoshop, and CGI in revolutionizing sound and visuals, calling it a "boon to the creatives who are ready to lean in." While acknowledging concerns about "low-quality content, aka AI slop," Mohan indicated that his team is working on improving systems to identify and remove such repetitive content. However, he also expressed reservations about making subjective judgments on what content should be allowed to flourish, citing the mainstream success of once-niche content like ASMR and live game streaming.

Research from AI company Kapwing suggests that approximately 20% of content presented to new YouTube accounts is now "low-quality AI video," with short-form video being a particular hotspot. Kapwing found that AI-generated content appeared in 104 of the first 500 YouTube Shorts clips shown to a newly created account, or 20.8%. The creator economy appears to be a significant driver, with channels earning substantial revenue from engagement and views. For instance, India’s "Bandar Apna Dost" channel has garnered 2.07 billion views, earning its creators an estimated $4 million a year.

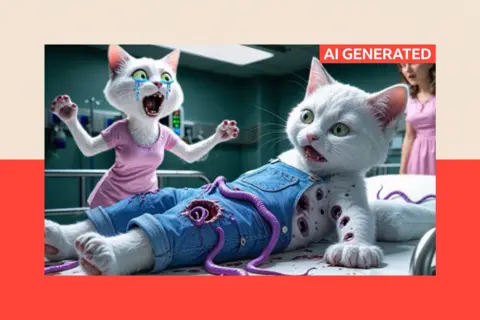

However, a significant backlash is mounting. Comment sections on viral AI videos are increasingly filled with users expressing frustration and anger. Théo, the student from Paris, has been a driving force behind this movement. He used his X account to complain to YouTube moderators about a flood of peculiar AI cartoons that attracted massive viewership, calling them disturbing and potentially harmful, especially if targeted at children. Examples included titles like "Mum cat saves kitten from deadly belly parasites," featuring gory scenes, and a clip in which a woman transforms into a monster after ingesting a parasite, only to be healed by Jesus. YouTube subsequently removed these channels, citing violations of its community guidelines and stating its commitment to reducing the spread of low-quality AI content while connecting users with high-quality content regardless of its origin.

Despite these efforts, Théo feels the battle against AI slop is largely lost, with the content becoming an ingrained part of the online landscape. Even platforms like Pinterest, known for lifestyle inspiration, have felt the impact, leading them to introduce an opt-out system for AI-generated content, which relies on users self-identifying their creations as artificial.

The backlash is palpable across various platforms, including TikTok, Threads, Instagram, and X. In many instances, comments criticizing AI slop garner more engagement than the original posts. A prime example involved a video of a snowboarder rescuing a wolf from a bear, which received 932 likes, while a comment expressing fatigue with "this AI s**t" garnered 2,400 likes. Nevertheless, this engagement, regardless of its nature, benefits social media platforms focused on user retention. The question remains: does the authenticity of content truly matter to the average social media user?

Emily Thorson, an associate professor at Syracuse University specializing in politics, misinformation, and misperceptions, suggests that the impact of AI slop depends on user intent. "If a person is on a short-video platform solely for entertainment, then their standard for whether something is worthwhile is simply ‘is it entertaining?’" she explained. "But if someone is on the platform to learn about a topic or to connect with community members, then they might perceive AI-generated content as more problematic." The perception of AI slop also hinges on its presentation; content clearly intended as a joke is generally accepted as such, while deceptive AI creations can provoke significant anger.

An AI-generated video depicting a hyper-realistic leopard hunt, for example, left viewers divided, with some questioning its authenticity and others confidently identifying it as AI. Alessandro Galeazzi, a researcher at the University of Padova, highlights the mental effort required to verify content and fears that users will eventually stop checking altogether. He warns that the "flood of nonsense, low-quality content generated using AI might further reduce people’s attention span." Galeazzi distinguishes between intentionally deceptive content and more whimsical AI creations like fish with shoes or gorillas lifting weights, but even the latter, he argues, can contribute to "brain rot," a term describing the detrimental impact of constant social media exposure on intellectual abilities. "I would say AI slop increases the brain rot effect, making people quickly consume content that they know is not only unlikely to be real, but probably not meaningful or interesting," he stated.

Beyond trivial content, AI-generated material can have far more serious implications. Elon Musk’s companies, xAI and X, faced backlash after the chatbot Grok was used to create non-consensual explicit imagery of women and children on X. Following the US attack on Venezuela, fake videos depicting citizens rejoicing and thanking the US were circulated, potentially shaping public opinion about how the raid was received. Analysts say this is especially concerning because many people rely on social media as their primary news source.

Dr. Manny Ahmed, CEO of OpenOrigins, a company that verifies whether images are AI-generated or real, argues that detection is the wrong starting point. "We are already at the point where you cannot confidently tell what is real by inspection alone," he said. "Instead of trying to detect what is fake, we need infrastructure that allows real content to publicly prove its origin." Meanwhile, many social media giants, including Meta and X, have reduced their moderation teams, opting instead for a crowd-sourced approach in which users are expected to flag misleading content.

The prospect of a "slop-free" social media platform emerging to challenge established giants seems unlikely, given the increasing difficulty in detecting AI and the subjective nature of defining "slop." However, the success of apps like BeReal, which gained popularity by emphasizing authenticity, suggests that a user-driven shift toward genuine content could influence existing platforms. Théo, though, remains pessimistic, believing that AI slop is an inevitable fixture of the online world. Despite continued submissions from his followers, he posts less frequently, having largely accepted the new online reality. "Unlike a lot of my followers, I’m not dogmatically against AI," he stated. "I’m against the pollution online of AI slop that’s made for quick entertainment and views."