Parents who have enrolled their teenagers in Instagram’s supervision tools will soon receive notifications if their child repeatedly searches for content related to suicide or self-harm on the platform. This marks a significant shift for Meta, Instagram’s parent company, which will now proactively inform parents about their child’s engagement with harmful material rather than only blocking such searches and directing users to external support resources. The new alerts are set to roll out to parents and teens participating in Instagram’s Teen Accounts experience in the UK, the United States, Australia, and Canada starting next week, with a global expansion planned for a later date.

However, the initiative has been met with sharp criticism from suicide prevention charities, most notably the Molly Rose Foundation, which warns that these measures "could do more harm than good." Andy Burrows, the chief executive of the Molly Rose Foundation, expressed strong reservations, stating, "This clumsy announcement is fraught with risk and we are concerned that forced disclosures could do more harm than good." Burrows elaborated on his concerns, saying that "every parent would want to know if their child is struggling, but these flimsy notifications will leave parents panicked and ill-prepared to have the sensitive and difficult conversations that will follow." He highlighted the potential for these alerts to trigger immediate panic in parents who may lack the emotional preparedness or information needed to support their child effectively.

Meta contends that these alerts will be accompanied by expert resources designed to assist parents in navigating these challenging conversations. Nevertheless, Ian Russell, the father of Molly Russell and founder of the Molly Rose Foundation, remains skeptical. Reflecting on the potential impact of such an alert, he told the BBC, "Imagine being a parent of a teenager and getting a message at work saying ‘your child is thinking of ending their life’… I don’t know how I’d react." He added, "And even if Meta say they’re going to supply support to that parent, in that moment of panic when you hear that about your child, I don’t think that’s a very sensible way of doing things."

A coalition of charities, including the Molly Rose Foundation, views Meta’s announcement as an implicit admission that more robust measures are needed to safeguard children on Instagram. Ged Flynn, chief executive of Papyrus Prevention of Young Suicide, while welcoming the notification system, argued that Meta is "neglecting the real issue that children and young people continue to be sucked into a dark and dangerous online world." Flynn shared the daily anxieties of parents who reach out to his organization, stating, "They don’t want to be warned after their children search for harmful content, they don’t want it to be spoon-fed to them by unthinking algorithms."

Echoing these sentiments, Leanda Barrington-Leach, executive director at the children’s charity 5Rights, asserted that for Meta to genuinely prioritize child safety, it "needs to return to the drawing board and make its systems age-appropriate by design and default." Burrows also pointed to previous research conducted by the Molly Rose Foundation, which indicated that Instagram continues to "actively" recommend content related to depression, suicide, and self-harm to "vulnerable young people." He concluded, "The onus should be on addressing these risks rather than making yet another cynically timed announcement that passes the buck to parents." Meta, however, has rejected these findings, stating they "misrepresent our efforts to empower parents and protect teens."

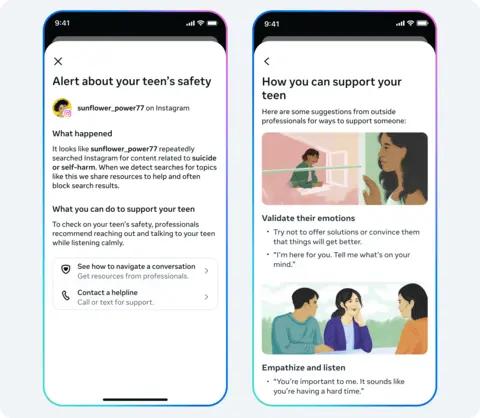

The new alerts from Instagram’s Teen Accounts are intended to alert parents to sudden changes in their child’s behavior and search patterns on the platform. In a blog post, Meta explained that these measures build upon Instagram’s existing protective features, which include hiding content about suicide or self-harm and blocking searches for dangerous material. Alerts will be sent to parents via email, text message, WhatsApp, or directly within the Instagram app, depending on the contact information Meta has on record for each family.

Meta acknowledges that its new alerts, derived from an analysis of user search patterns, may occasionally trigger notifications even when there is no genuine cause for concern, emphasizing that the system will "err on the side of caution." Sameer Hinduja, co-director of the Cyberbullying Research Center, commented that such an alert would "obviously" be alarming for any parent to receive. However, he stressed the critical importance of the "quality and usefulness of the resources parents immediately receive to guide them through what to do next." Hinduja added, "You can’t drop a notification on a parent and leave them on their own, and it seems like Meta understands that."

Looking ahead, Instagram plans to extend similar alert functionalities to its AI chatbot in the coming months, recognizing that children are "increasingly turn[ing] to AI for support" and may discuss self-harm and suicide with these digital assistants. The social media industry is under increasing pressure globally from governments and regulators to make its platforms safer for young users. This heightened scrutiny extends to how major technology companies conduct business with their youngest users.

Additional reporting was provided by James Kelly.

If you have been affected by the issues raised in this article, help and support is available via BBC Action Line.